Productivity & Automation

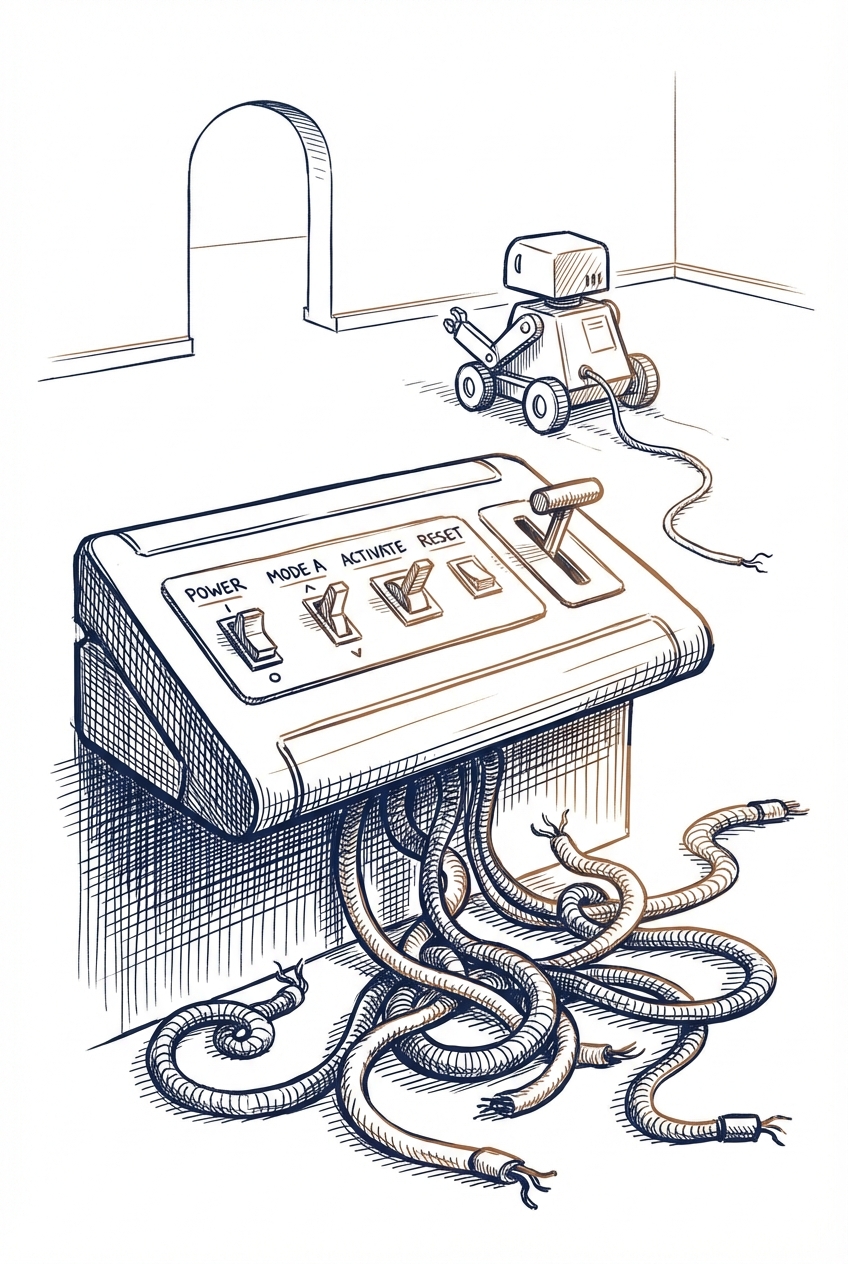

Enterprise AI teams are discovering a critical governance gap when deploying agent-to-agent (A2A) communication systems. While the technology demos well in architecture reviews, production deployments reveal serious oversight challenges—like autonomous agents making unauthorized high-value transactions without clear authorization trails. This highlights the urgent need for governance frameworks before scaling AI agent systems in business environments.

Key Takeaways

- Establish clear authorization protocols before deploying AI agents that can initiate financial transactions or make business decisions

- Implement audit trails and monitoring systems to track which agents are making what decisions, especially during off-hours

- Start with limited-scope agent deployments rather than full automation to identify governance gaps early
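
The authorization and audit-trail takeaways above can be illustrated with a minimal sketch. It is not taken from the cited article: the `AgentPolicy` fields, the `auto_approve_limit` threshold, and the three decision labels are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy; field names are illustrative."""
    agent_id: str
    allowed_actions: frozenset
    auto_approve_limit: float  # amounts above this need a human


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, agent_id: str, action: str, amount: float, decision: str) -> None:
        # Timestamp in UTC so off-hours activity is easy to spot later.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action,
            "amount": amount,
            "decision": decision,
        })


def authorize(policy: AgentPolicy, action: str, amount: float, log: AuditLog) -> str:
    """Return 'approved', 'needs_human', or 'denied', and log every request."""
    if action not in policy.allowed_actions:
        decision = "denied"
    elif amount > policy.auto_approve_limit:
        decision = "needs_human"
    else:
        decision = "approved"
    log.record(policy.agent_id, action, amount, decision)
    return decision


log = AuditLog()
policy = AgentPolicy("procurement-bot", frozenset({"purchase"}), auto_approve_limit=500.0)
small = authorize(policy, "purchase", 120.0, log)
large = authorize(policy, "purchase", 25_000.0, log)
other = authorize(policy, "refund", 10.0, log)
```

Every request is logged whether or not it is approved, so the trail also shows what agents attempted, not only what they completed.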

Source: O'Reilly Radar

planning

communication

Productivity & Automation

The AI landscape has evolved beyond simple chatbots into specialized agents that can handle complex, multi-step tasks autonomously. Professionals now need to match different AI tools to specific use cases—using frontier models for complex reasoning, specialized agents for routine workflows, and understanding when automation makes sense versus when human oversight is required. This shift requires rethinking how you structure work and which tools you deploy for different business processes.

Key Takeaways

- Evaluate your repetitive workflows to identify where autonomous AI agents can handle multi-step processes without constant supervision

- Match AI capability to task complexity: use advanced models (GPT-4, Claude) for strategic work requiring reasoning, and lighter agents for routine tasks

- Consider building or adopting specialized agents for domain-specific work rather than relying solely on general-purpose chatbots

Source: One Useful Thing

planning

communication

documents

research

Productivity & Automation

AI agent security vulnerabilities expose serious risks when using tools that connect to marketplaces or third-party integrations. Current prompt-based safeguards are insufficient—professionals need proper sandboxing, credential scoping, and logging before deploying agents in business workflows. This affects anyone using AI assistants with tool access or automation capabilities.

Key Takeaways

- Audit your AI agent permissions before connecting them to business tools or data sources—malicious skills can exploit marketplace integrations

- Require sandboxed environments and scoped credentials for any AI agents that access company systems or sensitive information

- Implement logging and monitoring for agent actions to detect unusual behavior or unauthorized access attempts
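
As a rough illustration of scoped credentials combined with logging, the sketch below wraps each tool call in a scope check. The scope strings, the `call_tool` helper, and the two ticket functions are assumptions made for this example, not part of any real agent framework.

```python
import logging

logger = logging.getLogger("agent.tools")


class ScopeError(PermissionError):
    """Raised when an agent calls a tool outside its granted scopes."""


def call_tool(granted_scopes, tool_name, required_scope, tool_fn, *args):
    # Refuse and log the attempt if the agent was never granted this scope.
    if required_scope not in granted_scopes:
        logger.warning("blocked %s: missing scope %s", tool_name, required_scope)
        raise ScopeError(f"{tool_name} requires scope {required_scope!r}")
    logger.info("calling %s under scope %s", tool_name, required_scope)
    return tool_fn(*args)


# Hypothetical tools standing in for real integrations.
def read_ticket(ticket_id):
    return f"ticket {ticket_id}"


def delete_ticket(ticket_id):
    return f"deleted {ticket_id}"


scopes = {"tickets:read"}  # read-only credential
result = call_tool(scopes, "read_ticket", "tickets:read", read_ticket, 42)
try:
    call_tool(scopes, "delete_ticket", "tickets:write", delete_ticket, 42)
    blocked = False
except ScopeError:
    blocked = True
```

The check lives in code rather than in the prompt, which is the distinction the summary draws between prompt-based safeguards and enforced ones.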

Source: TLDR AI

planning

code

communication

Productivity & Automation

OpenAI has added Lockdown Mode and "Elevated Risk" labels to ChatGPT to help professionals identify and protect against prompt-injection attacks in sensitive workflows. This security feature is particularly important for users who integrate ChatGPT into business processes where unauthorized data access or manipulation could occur through cleverly crafted prompts.

Key Takeaways

- Enable Lockdown Mode when working with sensitive business data or customer information to reduce prompt-injection vulnerabilities

- Review your current ChatGPT workflows for "Elevated Risk" labels, especially if you're using plugins, file uploads, or code execution features

- Consider restricting ChatGPT integrations in high-security environments until you understand which capabilities carry elevated risk

Source: TLDR AI

documents

code

communication

Productivity & Automation

Anthropic has released Claude Sonnet 4.6, an incremental upgrade to version 4.5 that delivers improved performance across most tasks while maintaining the same pricing and speed. This update offers better output quality for everyday professional workflows, though some edge cases may show mixed results compared to the previous version.

Key Takeaways

- Test Claude Sonnet 4.6 against your current workflows to verify improvements in your specific use cases before fully switching

- Expect better performance in writing, analysis, and coding tasks while maintaining the same cost structure as version 4.5

- Monitor for any regressions in specialized tasks, as the 'mostly better with some caveats' verdict suggests not all scenarios improved uniformly

Source: Latent Space

documents

code

research

communication

Productivity & Automation

Anthropic released Claude Sonnet 4.6, delivering performance comparable to the premium Opus 4.5 model at 40% lower cost ($3/$15 per million tokens vs $5/$25). The new model includes more current knowledge (August 2025 cutoff vs May 2025) and supports up to 1 million tokens in beta, making it a cost-effective upgrade for professionals running high-volume AI workflows.

Key Takeaways

- Switch to Sonnet 4.6 for Opus-level performance at significantly lower cost—particularly valuable for high-volume tasks like document processing or code generation

- Leverage the newer knowledge cutoff (August 2025) for tasks requiring more current information compared to older models

- Test the 1 million token context window in beta for processing large documents, codebases, or comprehensive research materials
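
The 40% figure follows directly from the per-million-token prices quoted above. The short calculation below checks it; the monthly token volumes are a made-up workload used only for illustration.

```python
def cost_usd(input_tokens, output_tokens, in_price, out_price):
    """Cost in USD, with prices quoted per million tokens."""
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


SONNET_4_6 = (3.0, 15.0)  # input/output USD per million tokens, from the summary
OPUS_4_5 = (5.0, 25.0)

# Hypothetical monthly workload: 200M input tokens, 20M output tokens.
workload = (200_000_000, 20_000_000)

sonnet_cost = cost_usd(*workload, *SONNET_4_6)
opus_cost = cost_usd(*workload, *OPUS_4_5)
savings = 1 - sonnet_cost / opus_cost
```

Because both the input and output prices are 60% of the Opus prices, the saving is 40% whatever the mix of input and output tokens.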

Source: Simon Willison's Blog

code

documents

research

Productivity & Automation

Zapier's automation platform enables businesses to instantly respond to Facebook Lead Ads by triggering custom notifications and follow-up actions. This workflow automation eliminates manual monitoring and ensures leads are contacted while their interest is highest, directly addressing the challenge of managing multiple lead sources simultaneously.

Key Takeaways

- Automate immediate follow-up on Facebook Lead Ads using Zapier to capture leads when interest peaks

- Set up custom notification workflows to alert your team instantly across multiple channels (email, Slack, SMS)

- Connect lead data directly to your CRM or email marketing tools to eliminate manual data entry

Source: Zapier AI Blog

communication

email

planning

Productivity & Automation

Zapier's automation workflows (Zaps) can streamline social media management by handling repetitive tasks like posting, responding, and scheduling. For professionals managing business social accounts, this means maintaining consistent presence without manual intervention, freeing time for strategic work while keeping content timely and on-brand.

Key Takeaways

- Automate social media posting schedules to maintain algorithmic visibility without daily manual effort

- Set up automated response workflows to engage followers quickly while maintaining brand voice

- Connect social media accounts with other business tools to create unified content workflows

Source: Zapier AI Blog

communication

planning

Productivity & Automation

Research reveals that AI models split into two distinct types when making decisions under uncertainty: reasoning models (like o1) that behave more rationally and consistently, versus conversational models (like standard ChatGPT) that are less predictable and more influenced by how questions are framed. For professionals, this means the type of AI model you choose significantly impacts decision quality—reasoning models are more reliable for analytical tasks, while conversational models may introd

Key Takeaways

- Choose reasoning-optimized models (o1, o3-mini) over standard conversational models when making decisions involving risk assessment, financial analysis, or strategic planning

- Test your AI workflows for framing sensitivity—conversational models may give different recommendations based on whether information is presented as gains versus losses or in different orders

- Avoid relying on conversational models for consistent decision support across multiple scenarios, as they show significant variability based on how questions are structured
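
One way to test a workflow for framing sensitivity is to send logically equivalent prompts and compare the answers. The sketch below assumes a hypothetical `ask` callable that wraps whatever model you use; the stub model and both prompts are invented for the example and are not drawn from the paper.

```python
from typing import Callable


def framing_consistent(ask: Callable[[str], str], framings: list[str]) -> bool:
    """True if the model gives the same normalized answer to every framing."""
    answers = {ask(prompt).strip().lower() for prompt in framings}
    return len(answers) == 1


# The same choice described as gains, then as losses.
GAIN = ("Plan A: 200 of 600 jobs are saved for certain. "
        "Plan B: a 1/3 chance that all 600 are saved and a 2/3 chance "
        "that none are saved. Answer A or B.")
LOSS = ("Plan A: 400 of 600 jobs are lost for certain. "
        "Plan B: a 1/3 chance that no jobs are lost and a 2/3 chance "
        "that all 600 are lost. Answer A or B.")


def stub_model(prompt: str) -> str:
    # Stand-in for a real model call; deliberately framing-sensitive.
    return "A" if "saved" in prompt else "B"


sensitive = not framing_consistent(stub_model, [GAIN, LOSS])
```

In practice you would replace `stub_model` with a call to your own model and run many prompt pairs, since a single pair says little about overall behavior.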

Source: arXiv - Artificial Intelligence

planning

research

spreadsheets

Productivity & Automation

OpenClaw is a customizable AI automation framework that connects multiple AI agents to handle complex business workflows—from CRM management and meeting transcription to content generation and security monitoring. This video demonstrates 21 practical implementations showing how professionals can build their own AI-powered systems that automate routine tasks, maintain institutional knowledge, and coordinate multiple specialized agents working together.

Key Takeaways

- Explore OpenClaw as an alternative to pre-built AI tools—it lets you create custom automation pipelines that connect meeting transcripts, CRM updates, knowledge bases, and content generation into unified workflows

- Consider implementing specialized AI 'councils' (business advisory, security, content strategy) that automatically analyze data and provide structured recommendations without manual prompting

- Review the memory system approach that maintains context across conversations and tasks, enabling AI agents to reference past decisions and accumulated knowledge

Source: Matthew Berman

meetings

documents

planning

communication

Productivity & Automation

This article introduces a framework for improving AI outputs by defining clear success criteria upfront. Instead of iterating blindly, professionals can reverse-engineer their desired outcome into testable criteria that guide AI responses and verify results. This approach transforms vague prompts into structured requests with measurable goals.

Key Takeaways

- Define your end goal before crafting AI prompts by identifying specific, testable criteria for what constitutes a successful output

- Reverse-engineer complex requests by breaking them into discrete, boolean checkpoints that the AI can work toward

- Use the same success criteria for both guiding your initial prompt and verifying the AI's final output
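
The idea of reusing one set of boolean criteria for both the prompt and the verification step can be sketched as follows. The specific criteria, the `build_prompt` wording, and the sample draft are illustrative assumptions rather than the article's own framework.

```python
# Each criterion is a named yes/no check on the output text.
CRITERIA = {
    "under_100_words": lambda text: len(text.split()) <= 100,
    "mentions_deadline": lambda text: "deadline" in text.lower(),
    "uses_bullets": lambda text: any(
        line.lstrip().startswith("- ") for line in text.splitlines()
    ),
}


def build_prompt(task: str, criteria: dict) -> str:
    """State the same criteria up front that will be checked afterwards."""
    checklist = "\n".join(f"- {name.replace('_', ' ')}" for name in criteria)
    return f"{task}\n\nThe response must satisfy:\n{checklist}"


def evaluate(output: str, criteria: dict) -> dict:
    """Run every check and report pass/fail per criterion."""
    return {name: check(output) for name, check in criteria.items()}


prompt = build_prompt("Summarize the project status.", CRITERIA)
draft = "Summary:\n- Ship the report before the deadline on Friday\n- Owner: Dana"
results = evaluate(draft, CRITERIA)
passed = all(results.values())
```

A failed check identifies which requirement to restate in the next prompt, which replaces blind iteration with a targeted retry.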

Source: TLDR AI

documents

communication

planning

Productivity & Automation

OpenClaw is a popular open-source AI agent that operates with full user permissions, creating significant security risks for businesses. While its 120,000+ GitHub stars demonstrate strong adoption, its ability to act autonomously on behalf of users requires careful security consideration before workplace deployment. Organizations need to understand the security implications before allowing employees to use this tool.

Key Takeaways

- Evaluate OpenClaw's permission model before deployment, as it operates with the same access rights as the installing user and can take autonomous actions

- Watch Zenity's security webinar to understand specific risks and mitigation strategies for agent-based AI tools in your organization

- Consider establishing security protocols for AI agents before widespread employee adoption, given OpenClaw's popularity and potential for unauthorized actions

Source: TLDR AI

planning

communication

Productivity & Automation

Password managers claiming "zero-knowledge" architecture may still be vulnerable to server compromises that expose vault contents. This security concern is critical for professionals managing credentials for AI tools, API keys, and business accounts. Understanding the actual security model of your password manager affects how you protect sensitive access credentials across your workflow.

Key Takeaways

- Verify your password manager's actual security architecture beyond marketing claims, especially if storing API keys for AI services

- Consider using hardware security keys or local-only password storage for your most critical AI tool credentials

- Review which password manager you use for business accounts and ensure it aligns with your organization's security requirements

Source: Ars Technica

communication

code

documents

Productivity & Automation

Major tech companies including Meta are restricting employee access to OpenClaw, a powerful agentic AI tool, due to security concerns about its unpredictable behavior. While the tool offers advanced autonomous capabilities, security experts warn that its lack of guardrails poses risks for enterprise environments, particularly around data handling and unintended actions.

Key Takeaways

- Evaluate your organization's security policies before deploying agentic AI tools that can take autonomous actions

- Monitor which AI tools your team uses and establish clear guidelines for experimental versus production-ready solutions

- Consider the trade-off between capability and control when selecting AI assistants for sensitive business workflows

Source: Wired - AI

planning

communication

Productivity & Automation

New research addresses a critical problem with AI chatbots and dialogue systems: they frequently generate factually incorrect information that can mislead users. The Fine-Refine framework breaks down AI responses into smaller pieces, verifies each fact against external sources, and corrects errors iteratively—achieving up to 7.63-point improvements in factual accuracy with minimal impact on response quality.

Key Takeaways

- Verify critical information from AI chatbots independently, especially when responses contain multiple factual claims that could impact business decisions

- Expect improved accuracy in future dialogue AI tools as developers adopt fact-checking methods that validate responses at a granular level

- Consider implementing verification workflows for customer-facing chatbots, as current systems may produce misleading information that undermines trust

Source: arXiv - Computation and Language (NLP)

communication

research

Productivity & Automation

Researchers have developed X-MAP, a framework that identifies and explains why spam and phishing detection systems make mistakes. The system can flag potentially misclassified messages with 98% accuracy and recover up to 97% of legitimate emails incorrectly marked as spam, offering businesses a practical way to reduce false positives that damage customer trust and workflow efficiency.

Key Takeaways

- Evaluate your current email security systems for false positive rates—legitimate emails marked as spam can cost you business opportunities and damage customer relationships

- Consider implementing secondary verification layers for spam detection, as this research shows misclassified messages exhibit distinct patterns that can be caught before rejection

- Monitor your spam filter's performance metrics, particularly false rejection rates, as the technology now exists to reduce these errors by over 90%

Source: arXiv - Artificial Intelligence

email

communication

Productivity & Automation

New research reveals that even advanced AI agents (GPT-5-powered) struggle with complex, multi-step research tasks, succeeding only 6.7% of the time and completing just 26.5% of subtasks. The study identifies critical failure patterns including poor time management, overconfidence, and context limitations—issues that directly mirror challenges professionals face when deploying AI agents for complex workflows.

Key Takeaways

- Expect reliability gaps when using AI agents for multi-step projects: even frontier models complete fewer than 30% of complex subtasks successfully

- Monitor for common failure patterns in your AI workflows: agents rushing through tasks, overcommitting to weak solutions, and losing track of parallel work streams

- Plan for human oversight on long-horizon tasks—AI agents currently lack the patience and resource management needed for end-to-end project completion

Source: arXiv - Artificial Intelligence

research

planning

code

Productivity & Automation

Over-automating customer interactions with AI can damage business relationships and erode loyalty. The article argues for strategic AI implementation that streamlines backend processes while preserving human touchpoints where they matter most to customers.

Key Takeaways

- Evaluate which customer interactions genuinely benefit from automation versus those requiring human connection

- Focus AI implementation on internal processes and operational efficiency rather than replacing all customer-facing roles

- Monitor customer satisfaction metrics after implementing AI touchpoints to identify friction points

Source: Fast Company

communication

planning

Productivity & Automation

Organizations are flattening hierarchies, creating 'supermanagers' who oversee larger teams with fewer middle management layers. This shift increases managerial workload and burnout risk while changing how leadership operates. For professionals using AI, this trend creates opportunities to leverage automation tools for delegation, communication, and workflow management that supermanagers desperately need.

Key Takeaways

- Evaluate AI tools that can handle routine managerial tasks like status updates, meeting summaries, and progress tracking to reduce supermanager workload

- Consider positioning yourself as someone who can work more autonomously using AI assistants, reducing the burden on stretched managers

- Prepare for less direct oversight by building AI-supported systems for self-management, goal tracking, and decision documentation

Source: Fast Company

meetings

communication

planning

documents

Productivity & Automation

The article discusses 'identic AI': AI agents that can act on your behalf with your identity and authority. As AI agents become more autonomous in handling tasks like scheduling, purchasing, and communications, professionals need to understand the implications for delegation, security, and maintaining control over actions taken in their name.

Key Takeaways

- Prepare for AI agents that will represent you in business interactions, requiring clear boundaries on what decisions they can make autonomously

- Establish verification protocols now for distinguishing between human and AI-generated communications from colleagues and partners

- Consider the liability and security implications of granting AI systems authority to act with your business identity

Source: Harvard Business Review

email

meetings

planning

communication

Productivity & Automation

PersonaPlex is a commercially available real-time voice AI that can listen and speak simultaneously, enabling natural back-and-forth conversations without the typical delays of current voice assistants. Unlike existing tools that wait for you to finish speaking, it processes your speech continuously and can interrupt or respond mid-conversation, making voice interactions feel more human and efficient for business communications.

Key Takeaways

- Evaluate PersonaPlex for customer service applications where natural, interruption-capable voice interactions could improve response times and customer satisfaction

- Consider replacing traditional voice assistants in internal workflows where real-time voice collaboration matters, such as hands-free documentation or meeting facilitation

- Watch for integration opportunities with existing communication platforms, as full-duplex voice AI could transform virtual meetings and voice-based task management

Source: TLDR AI

meetings

communication

Productivity & Automation

Rodney v0.4.0 brings significant improvements to this CLI browser automation tool, making it more practical for professionals who need to automate web-based workflows. The update adds testing capabilities, better session management, and cross-platform support including Windows, enabling more reliable automation of repetitive browser tasks without manual intervention.

Key Takeaways

- Use the new 'rodney assert' command to create automated JavaScript tests for web applications, ensuring your browser automation scripts work reliably before deploying them in production workflows

- Leverage directory-scoped sessions with --local/--global flags to manage multiple automation projects simultaneously without session conflicts

- Try the --show option to make browser windows visible during development, helping you debug and refine automation scripts more efficiently

Source: Simon Willison's Blog

code

research

planning

Productivity & Automation

Research reveals that AI vision-language models can be systematically influenced by subtle visual changes in images—like lighting, composition, or backgrounds—affecting which products they recommend or actions they take. This matters for professionals using AI agents for e-commerce, content curation, or automated decision-making, as these systems may have exploitable visual biases that could impact business outcomes or be manipulated by bad actors.

Key Takeaways

- Audit AI-generated recommendations if you use vision-based agents for product selection, content curation, or purchasing decisions—they may be influenced by visual presentation rather than actual quality

- Consider testing your product images and marketing materials with multiple variations to understand how AI systems might interpret and rank them differently

- Watch for potential manipulation risks if competitors or malicious actors could optimize images specifically to influence AI agent decisions in your market

Source: arXiv - Computer Vision

research

planning

Productivity & Automation

New research introduces Mnemis, a memory system that helps AI chatbots better recall and use information from long conversation histories by combining quick similarity search with structured, hierarchical memory organization. This dual approach significantly improves AI assistants' ability to maintain context over extended interactions, achieving over 90% accuracy on long-term memory benchmarks. For professionals using AI tools daily, this suggests future chatbots will better remember project details.

Key Takeaways

- Expect next-generation AI assistants to maintain better context across long projects and multiple conversation sessions without losing track of earlier discussions

- Consider how improved long-term memory could enable AI tools to serve as more reliable project companions that remember your preferences, past decisions, and ongoing work

- Watch for AI tools that can both quickly find relevant past information and comprehensively review entire project histories when needed

Source: arXiv - Computation and Language (NLP)

communication

documents

planning

Productivity & Automation

RUVA introduces a transparent alternative to current AI assistants by using knowledge graphs instead of vector databases, allowing users to see exactly what their AI knows and delete specific information permanently. Unlike traditional RAG systems where deleted data leaves probabilistic traces, RUVA enables precise fact removal, which is critical for professionals handling sensitive business information. This "glass box" approach puts users in control of their AI's memory, addressing privacy and accountability.

Key Takeaways

- Evaluate whether your current AI tools allow you to inspect and verify what data they're using when generating responses—transparency matters for business-critical decisions

- Consider knowledge graph-based AI systems when handling sensitive client or proprietary information that may need complete removal

- Watch for emerging "glass box" AI architectures that offer auditable reasoning trails, especially if your industry has compliance requirements

Source: arXiv - Artificial Intelligence

documents

research

communication

Productivity & Automation

Manus AI's launch of a 24/7 autonomous agent through Telegram was immediately suspended by the platform without explanation, highlighting the risks of building AI workflows on third-party messaging platforms. This incident underscores the importance of platform dependency considerations when deploying AI agents for business operations, as sudden suspensions can disrupt critical workflows without warning or recourse.

Key Takeaways

- Evaluate platform risk before deploying AI agents on third-party services like Telegram, Slack, or WhatsApp that can suspend access without notice

- Consider self-hosted or enterprise-grade solutions for mission-critical AI automation to maintain control and avoid unexpected disruptions

- Develop contingency plans for AI agent workflows that include backup communication channels or alternative deployment methods

Source: TLDR AI

communication

planning

Productivity & Automation

Both Anthropic and OpenAI have released faster inference modes for their LLMs, but with different trade-offs. OpenAI achieves 1,000+ tokens per second using specialized Cerebras chips but with reduced model capability, while Anthropic offers 2.5x speed improvements on full-capability models through optimized batch processing. For professionals, this means choosing between raw speed with limitations or moderate speed gains with full model performance.

Key Takeaways

- Evaluate whether Anthropic's 2.5x speed boost meets your needs before considering OpenAI's faster but less capable option

- Consider Anthropic's fast mode for time-sensitive tasks where you still need full model reasoning capabilities

- Monitor your current token usage patterns to determine if speed improvements would meaningfully impact your workflow

Source: TLDR AI

documents

research

communication

Productivity & Automation

Researchers have developed a new framework for automating customer service that uses flowcharts instead of complex AI orchestration systems, allowing businesses to deploy smaller language models locally while maintaining privacy. The approach converts service dialogues into structured flowcharts that guide AI responses, making it easier for companies to build automated support systems without extensive technical infrastructure or exposing customer data to external services.

Key Takeaways

- Consider local deployment of smaller AI models for customer service to maintain data privacy and reduce costs compared to cloud-based solutions

- Explore flowchart-based automation frameworks that can learn from existing service dialogues without requiring extensive manual configuration

- Evaluate whether your customer service workflows can be structured into repeatable processes that AI can follow with minimal orchestration

Source: arXiv - Computation and Language (NLP)

communication

planning

Productivity & Automation

New research reveals that AI agents struggle with poorly documented tools and APIs—a common real-world scenario. A proposed solution called ToolObserver helps AI systems learn tool behavior through trial and error, improving performance while using significantly fewer resources than current methods. This addresses a critical gap between research benchmarks and the messy, underspecified tools professionals encounter daily.

Key Takeaways

- Expect AI agents to struggle with vague or poorly documented APIs and tools—current systems assume perfect documentation that rarely exists in practice

- Consider that AI tool performance may improve over time as systems learn from failed attempts and execution feedback, rather than requiring perfect upfront documentation

- Watch for emerging solutions that help AI agents adapt to underspecified tools through interaction, potentially reducing the need for extensive manual documentation

Source: arXiv - Computation and Language (NLP)

code

planning

Productivity & Automation

Researchers have developed WAC, a more reliable web automation agent that simulates action consequences before executing them, reducing costly mistakes in automated workflows. The system uses multiple AI models working together—one to suggest actions, another to predict outcomes, and a third to catch potential problems—achieving modest but meaningful improvements in task completion rates. This represents progress toward more trustworthy AI agents that can handle complex web-based tasks without c

Key Takeaways

- Expect more reliable web automation tools that preview action consequences before executing, reducing workflow disruptions from AI mistakes

- Watch for AI agent tools that use multi-model collaboration to cross-check decisions, particularly for high-stakes tasks like data entry or form submissions

- Consider the risk-awareness capabilities when evaluating automation tools—systems that can identify and flag risky actions before executing them will save time and prevent errors
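
The general pattern of previewing an action's consequences before executing it can be sketched in a few lines. This is not the WAC implementation: plain functions stand in for the separate models the summary describes, and the form state, action names, and risk rule are all hypothetical.

```python
def run_guarded(state, action, simulate, is_risky, execute):
    """Predict the outcome first; execute only if the prediction looks safe."""
    predicted = simulate(state, action)
    if is_risky(state, predicted):
        return state, "blocked"  # state is left untouched
    return execute(state, action), "executed"


def simulate(state, action):
    # Stand-in for an outcome-prediction model: returns a preview copy.
    preview = dict(state)
    if action == "clear_form":
        preview["fields"] = {}
    elif action == "submit":
        preview["submitted"] = True
    return preview


def is_risky(before, after):
    # Stand-in for a risk checker: flag anything that wipes entered data.
    return bool(before["fields"]) and not after["fields"]


def execute(state, action):
    # Stand-in for the real browser action; here it just applies the preview.
    return simulate(state, action)


form = {"fields": {"email": "a@example.com"}, "submitted": False}
after_clear, clear_status = run_guarded(form, "clear_form", simulate, is_risky, execute)
after_submit, submit_status = run_guarded(form, "submit", simulate, is_risky, execute)
```

The destructive action is stopped before it touches the form, while the harmless one goes through.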

Source: arXiv - Artificial Intelligence

planning

research

Productivity & Automation

New research introduces a framework for AI agents to break down complex tasks and intelligently delegate work to other AI agents or humans. This advancement could enable more sophisticated multi-agent workflows where AI systems coordinate with each other and human team members to tackle ambitious projects that single AI tools can't handle alone.

Key Takeaways

- Anticipate AI tools that can automatically break down your complex requests into smaller tasks and route them appropriately

- Consider how delegation frameworks could enable AI assistants to coordinate with multiple specialized tools in your workflow

- Watch for emerging platforms that allow AI agents to collaborate with both other AIs and human team members on multi-step projects

Source: TLDR AI

planning

communication

Productivity & Automation

OpenClaw's creator is joining OpenAI while keeping the agent platform open-source and independent under a new foundation. This move will give OpenClaw access to OpenAI's latest models and resources, potentially improving the quality and capabilities of accessible AI agents for business users. The platform will maintain community ownership and data control while benefiting from enterprise-grade infrastructure.

Key Takeaways

- Monitor OpenClaw's development as it gains access to OpenAI's advanced models, which may offer more capable agent solutions for workflow automation

- Consider OpenClaw for agent-based automation projects if you prioritize open-source tools and data ownership over proprietary solutions

- Watch for new features and integrations as the foundation structure may accelerate development of practical business applications

Source: TLDR AI

planning

communication