Productivity & Automation

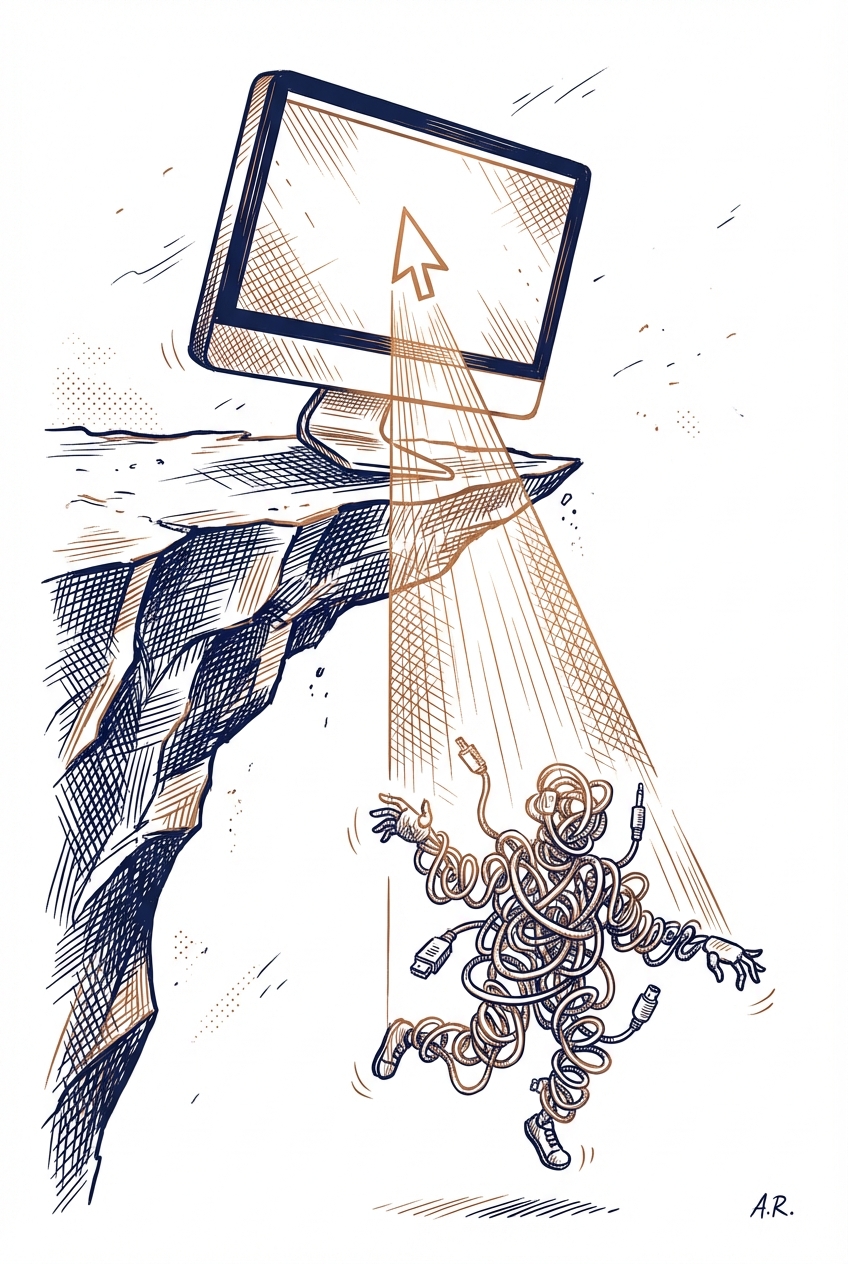

MIT research suggests that heavy reliance on AI tools may reduce our ability to perform cognitive tasks independently, similar to building up a tolerance. This raises important questions about how professionals should balance AI assistance with maintaining their own critical thinking and creative capabilities in daily workflows.

Key Takeaways

- Monitor your dependency on AI for core skills—if tasks feel harder without AI assistance, you may be over-relying on it

- Alternate between AI-assisted and manual work to maintain your independent problem-solving abilities

- Use AI as a collaborative tool rather than a replacement for thinking through complex challenges yourself

Source: Matt Wolfe (YouTube)

planning

research

documents

communication

Productivity & Automation

OpenClaw is a new AI agent that can autonomously control your computer to complete complex tasks like booking travel, managing emails, and contacting vendors. This represents a significant shift from traditional AI assistants that require human oversight to autonomous agents that can execute multi-step workflows independently, though this raises important security considerations about granting AI access to your systems.

Key Takeaways

- Evaluate whether autonomous AI agents like OpenClaw could replace repetitive multi-step tasks in your workflow, such as vendor communications or travel arrangements

- Consider the security implications before granting any AI agent direct access to your computer, email, or business systems

- Monitor this emerging category of 'computer-using agents' as they may fundamentally change how professionals delegate administrative tasks

Source: Bloomberg Technology

email

planning

communication

Productivity & Automation

AI agents are evolving beyond simple task automation to handle complex workflow orchestration like schedule management and email processing. These tools can follow rules, maintain context across interactions, make goal-oriented decisions, and in some cases improve their performance over time. For professionals, this means delegating entire workflow sequences rather than just individual tasks.

Key Takeaways

- Explore AI agents for managing repetitive administrative tasks like email triage and calendar coordination that currently consume significant time

- Consider implementing rule-based AI agents for workflows that require consistent decision-making across multiple steps

- Evaluate agents that maintain context across interactions to handle complex, multi-stage processes without constant human intervention

Source: Zapier AI Blog

email

planning

communication

Productivity & Automation

As AI tools become commoditized with similar default capabilities, professionals have a limited window to build competitive advantages through intentional, customized AI implementation. The article warns that relying on out-of-the-box AI solutions without developing proprietary workflows, data advantages, or specialized expertise will leave businesses undifferentiated as competitors adopt the same tools.

Key Takeaways

- Develop proprietary workflows and processes around AI tools rather than relying on default settings that competitors can easily replicate

- Build domain-specific knowledge bases and custom training data to create AI outputs unique to your business context

- Invest time now in learning advanced AI features and integration techniques before the competitive window closes

Source: TLDR AI

planning

documents

research

Productivity & Automation

Wispr Flow is a voice-to-text tool that claims to be 4x faster than typing for AI prompts, working system-wide across ChatGPT, Claude, Cursor, and other AI tools. The tool converts spoken input into formatted text with 89% requiring no edits, potentially accelerating prompt engineering and reducing the friction of providing detailed context to AI assistants.

Key Takeaways

- Consider voice dictation for complex prompts that require extensive context or detailed instructions to save time

- Test Wispr Flow's free version across your primary AI tools (ChatGPT, Claude, Cursor) to evaluate speed gains in your workflow

- Leverage faster input methods to provide richer context to AI tools, potentially improving output quality

Source: TLDR AI

code

documents

communication

Productivity & Automation

Research reveals that less accurate AI models are significantly more overconfident in their responses—similar to the human Dunning-Kruger effect. Claude Haiku 4.5 showed the best balance of accuracy and appropriate confidence, while Kimi K2 was highly overconfident despite poor performance. This matters for professionals who rely on AI confidence scores to make decisions in their work.

Key Takeaways

- Verify AI outputs independently rather than trusting confidence scores alone, especially when using less established models

- Consider Claude Haiku 4.5 for tasks where accurate confidence assessment is critical to your workflow

- Watch for overconfident responses in AI tools—they may indicate lower underlying accuracy rather than certainty
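One rough way to act on these takeaways is to spot-check a model's stated confidence against how often it is actually right. The sketch below is illustrative only: the `overconfidence_gap` helper and the sample data are assumptions, not the paper's metric or its results.

```python
from statistics import mean

def overconfidence_gap(records):
    """Return mean stated confidence minus observed accuracy.

    `records` is a list of (confidence, was_correct) pairs, where
    confidence is a float in [0, 1] and was_correct is a bool.
    A positive gap means the model claims more certainty than it earns.
    """
    if not records:
        raise ValueError("need at least one record")
    avg_confidence = mean(conf for conf, _ in records)
    accuracy = mean(1.0 if ok else 0.0 for _, ok in records)
    return avg_confidence - accuracy

# Hypothetical spot-check data, not results from the research.
sample = [(0.9, True), (0.9, False), (0.8, False), (0.6, True)]
gap = overconfidence_gap(sample)
```

A gap near zero suggests stated confidence roughly tracks accuracy on your own tasks; a large positive gap is a reason to verify outputs independently.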

Source: arXiv - Computation and Language (NLP)

research

documents

planning

Productivity & Automation

Organizations are failing to scale AI adoption not due to technical limitations, but because their AI tools aren't designed with user experience in mind. McKinsey argues that successful AI implementation requires focusing on how people actually work and designing tools they'll naturally embrace, rather than forcing technical solutions into existing workflows.

Key Takeaways

- Evaluate your current AI tools through a user experience lens—if adoption is low, the problem is likely design and integration, not capability

- Focus on experiential design when selecting or building AI solutions: prioritize tools that fit naturally into existing workflows rather than requiring process changes

- Consider piloting AI implementations with small teams to identify friction points before scaling across your organization

Source: McKinsey Insights

planning

communication

Productivity & Automation

While ChatGPT dominates the AI chatbot market, specialized alternatives may better serve specific professional workflows. This article identifies 8 ChatGPT alternatives that professionals should evaluate based on their particular use cases, as general-purpose tools often underperform compared to specialized solutions for specific tasks.

Key Takeaways

- Evaluate specialized AI chatbots for your specific workflow needs rather than defaulting to ChatGPT for all tasks

- Consider testing alternative tools if you've noticed ChatGPT's limitations in your particular use case

- Match AI tools to specific job functions rather than relying on a single general-purpose solution

Source: Zapier AI Blog

communication

research

documents

Productivity & Automation

Visualping offers AI-powered website monitoring that tracks competitor pricing, product updates, and policy changes as frequently as every two minutes, with automated summaries explaining what changed and why it matters. The platform integrates with Zapier to automate workflows, eliminating the need to manually check websites for critical business intelligence.

Key Takeaways

- Monitor competitor pricing and product changes automatically without dedicating staff time to manual website checks

- Set up automated alerts for legal policy updates, terms of service changes, and compliance-related content on vendor websites

- Use AI-generated summaries to quickly understand what changed on monitored pages and assess business impact

Source: Zapier AI Blog

research

communication

planning

Productivity & Automation

Microsoft's Copilot Cowork represents a significant evolution in workplace automation, moving beyond single-task assistance to coordinating multiple actions across your entire Microsoft 365 environment. The system can autonomously handle complex workflows like rescheduling meetings while preparing related documents, though it remains in research preview until March 2026. For professionals already invested in the Microsoft ecosystem, this signals a shift toward AI agents that manage interconnecte

Key Takeaways

- Monitor the March 2026 timeline if you're planning Microsoft 365 workflow improvements, as Copilot Cowork could eliminate manual coordination between emails, meetings, and documents

- Evaluate your current cross-application workflows to identify repetitive task sequences that this automation could handle, such as meeting prep or follow-up documentation

- Consider the control mechanisms you'll need when AI manages multi-step processes autonomously, ensuring oversight without losing productivity gains

Source: TLDR AI

meetings

email

documents

planning

Productivity & Automation

When deploying AI for customer interactions, the tone and voice of your AI matters as much as the accuracy of its responses. Companies need to deliberately design how their AI communicates—whether formal or casual, empathetic or efficient—to align with brand identity and customer expectations. This decision affects customer satisfaction and brand perception across chatbots, email automation, and voice assistants.

Key Takeaways

- Define your AI's communication style before deployment to ensure consistency with your brand voice across all customer touchpoints

- Test different tones with actual customers to measure impact on satisfaction and trust, rather than assuming what works best

- Consider context-specific tone adjustments—customer service AI may need different warmth levels than sales or technical support interactions

Source: Harvard Business Review

communication

email

Productivity & Automation

The AI tool landscape is rapidly diversifying beyond ChatGPT, with established productivity apps like CapCut, Canva, and Notion embedding AI as core features rather than standalone products. For professionals, this signals a shift toward choosing integrated AI capabilities within existing workflow tools rather than relying solely on separate AI assistants, particularly as video generation and agentic AI capabilities mature.

Key Takeaways

- Evaluate your current productivity tools for built-in AI features before adding separate AI subscriptions—apps like Canva and Notion now offer integrated capabilities

- Monitor competitors such as Gemini and Claude when choosing paid subscriptions, as growing competition may drive better pricing and features for business users

- Explore video generation tools as they become more mainstream in consumer apps, potentially streamlining content creation workflows

Source: TLDR AI

documents

design

planning

Productivity & Automation

OpenAI has implemented security measures in ChatGPT to prevent prompt injection attacks and social engineering attempts when using AI agents. These protections constrain risky actions and safeguard sensitive data, making agent-based workflows more secure for business use. Understanding these defenses helps professionals evaluate the safety of deploying AI agents in their operations.

Key Takeaways

- Evaluate your current AI agent implementations for prompt injection vulnerabilities, especially if they handle sensitive business data or have access to external systems

- Consider using ChatGPT's built-in agent protections when deploying automated workflows that interact with customers or process confidential information

- Review your AI usage policies to account for social engineering risks, particularly when agents have decision-making authority or data access

Source: OpenAI Blog

planning

communication

documents

Productivity & Automation

As AI systems become more autonomous, organizations face a critical architectural decision: whether every AI action requires real-time approval or if some can operate with asynchronous oversight. This trade-off between safety controls and operational speed will directly impact how you deploy AI agents and automation in your workflows.

Key Takeaways

- Evaluate which AI tasks in your workflow truly require human approval before execution versus those that can be reviewed after the fact

- Consider implementing tiered governance where low-risk AI actions (like drafting emails) run freely while high-stakes decisions (like customer commitments) require pre-approval

- Prepare for performance trade-offs when adding safety controls to autonomous AI tools, as synchronous validation will slow down task completion
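The tiered governance idea can be sketched as a small routing function. This is a minimal illustration under stated assumptions: the action names, the `LOW_RISK` allowlist, and the `approve` callback are all hypothetical, and unknown actions default to pre-approval as the more cautious choice.

```python
from dataclasses import dataclass
from enum import Enum

class Oversight(Enum):
    ASYNC_REVIEW = "run now, review later"
    PRE_APPROVAL = "hold until a human approves"

# Hypothetical allowlist of low-risk action kinds; anything else needs approval.
LOW_RISK = {"draft_email", "summarize_document"}

@dataclass
class Action:
    kind: str
    description: str

def required_oversight(action: Action) -> Oversight:
    """Low-risk actions get asynchronous review; all others need pre-approval."""
    if action.kind in LOW_RISK:
        return Oversight.ASYNC_REVIEW
    return Oversight.PRE_APPROVAL

def run(action: Action, approve) -> str:
    """Execute an action, consulting `approve` only when pre-approval is required."""
    if required_oversight(action) is Oversight.PRE_APPROVAL and not approve(action):
        return "blocked"
    return "executed"
```

Because `approve` is called synchronously, every action outside the allowlist waits on a human, which is exactly the speed cost the last takeaway describes.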

Source: O'Reilly Radar

planning

communication

Productivity & Automation

Google's new Workspace CLI enables AI agents to directly interact with your Gmail, Docs, and Calendar through command-line interfaces, making it easier to automate routine business tasks. This shift toward agent-friendly tools signals that major platforms are preparing for AI assistants to handle more of your daily workflow operations, potentially changing how you'll interact with productivity software in the near future.

Key Takeaways

- Monitor how Google Workspace CLI evolves—it may soon enable AI agents to manage your emails, schedule meetings, and update documents automatically without manual intervention

- Consider how command-line accessibility for agents could affect your workflow automation strategy, especially if you're already using tools like Zapier or custom scripts

- Watch for similar agent-friendly interfaces from Microsoft and other enterprise platforms as they compete to become the preferred ecosystem for AI automation

Source: AI Breakdown

email

documents

meetings

planning

Productivity & Automation

Pathway is building AI systems that work with live, continuously updating data rather than static datasets that quickly become outdated. This addresses a critical limitation in current AI applications where models, RAG systems, and knowledge bases operate on stale information, potentially improving accuracy and relevance for enterprise workflows that depend on current data.

Key Takeaways

- Evaluate whether your AI applications suffer from outdated information—most current systems rely on static datasets that don't reflect real-time changes in your business data

- Consider real-time data processing for RAG systems and knowledge bases if your work requires up-to-date information from continuously changing sources

- Watch for emerging tools that enable AI agents to maintain current context rather than resetting with each interaction, improving continuity in complex workflows

Source: Eye on AI

research

documents

planning

Productivity & Automation

New research explains why prompt engineering techniques like Chain-of-Thought and few-shot examples actually work, providing a theoretical foundation for what many professionals already observe in practice. Understanding these mechanisms can help you craft more effective prompts by reducing ambiguity and breaking complex tasks into simpler steps that align with how LLMs process information.

Key Takeaways

- Structure prompts to minimize ambiguity—clearer task definitions help the model concentrate on your intended outcome rather than guessing between multiple interpretations

- Use Chain-of-Thought prompting for complex multi-step problems by breaking them into sequential sub-tasks the model already knows how to handle

- Leverage few-shot examples (In-Context Learning) to clarify your intent and reduce confusion about what you're asking the model to do
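The three takeaways above can be combined in a simple prompt template. The sketch below is one possible layout, not a format taken from the research; the `build_prompt` helper and its section labels are assumptions made for illustration.

```python
def build_prompt(task, examples, user_input, steps=None):
    """Assemble a prompt with a clear task, few-shot examples, and optional steps.

    task: one-sentence task definition, to reduce ambiguity.
    examples: list of (input, output) pairs used as few-shot examples.
    user_input: the new input the model should handle.
    steps: optional list of sub-tasks for Chain-of-Thought style prompting.
    """
    parts = [f"Task: {task}"]
    for example_input, example_output in examples:
        parts.append(f"Example input: {example_input}\nExample output: {example_output}")
    if steps:
        numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
        parts.append("Work through these steps in order:\n" + numbered)
    parts.append(f"Input: {user_input}")
    return "\n\n".join(parts)

prompt = build_prompt(
    task="Classify the support ticket as billing or technical",
    examples=[("I was charged twice", "billing")],
    user_input="My app crashes on launch",
    steps=["Identify the product area", "Pick the label"],
)
```

The resulting string can be sent to whichever model you use; only the structure matters here, not the exact wording of the labels.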

Source: arXiv - Computation and Language (NLP)

documents

research

communication

Productivity & Automation

OpenAI has released a training dataset that significantly improves how AI models handle conflicting instructions from different sources (system prompts, users, tools). This advancement makes AI assistants more secure against prompt injection attacks and jailbreaks while maintaining helpfulness—critical for professionals relying on AI tools in sensitive business contexts.

Key Takeaways

- Expect improved security in AI tools as models become better at prioritizing legitimate instructions over malicious prompt injections

- Understand that AI assistants will become more reliable at following your intended instructions even when processing untrusted content or third-party tool outputs

- Monitor your AI workflows for reduced instances of unexpected behavior when using agents or tools that interact with external data sources

Source: arXiv - Artificial Intelligence

documents

research

communication

Productivity & Automation

Perplexity has launched a competitive response to OpenAI's capabilities, while Google Workspace Studio now enables users to create agentic workflows directly within their productivity suite. These developments signal increased competition in AI tooling and expanded automation options for business users working within familiar platforms.

Key Takeaways

- Evaluate Perplexity's new features as an alternative to OpenAI tools for research and information retrieval tasks

- Explore Google Workspace Studio's agentic workflow capabilities to automate repetitive tasks across Docs, Sheets, and Gmail

- Monitor how competition between AI providers may lead to better pricing or feature sets for business users

Source: The Rundown AI

documents

email

research

planning

Productivity & Automation

OpenClaw, an open-source AI tool that autonomously controls devices to complete tasks, is sparking entrepreneurial activity in China. The emergence of accessible autonomous agent technology signals a shift toward AI tools that can handle multi-step workflows independently, potentially transforming how professionals delegate routine computer-based tasks.

Key Takeaways

- Monitor OpenClaw and similar autonomous agent tools as they mature—these represent the next evolution beyond chatbots for automating multi-step workflows

- Consider how device-controlling AI agents could automate repetitive computer tasks in your workflow, from data entry to report generation

- Watch for commercial applications emerging from open-source autonomous agents, as entrepreneurs build business-ready versions with support and reliability

Source: MIT Technology Review

planning

code

Productivity & Automation

NVIDIA's new Nemotron 3 Super model delivers 5x faster performance for AI agents that can autonomously complete complex tasks. This open-source model is now available through platforms like Perplexity, making advanced agentic AI more accessible for business automation workflows. The efficiency gains mean AI agents can handle more sophisticated multi-step tasks without proportional increases in cost or latency.

Key Takeaways

- Explore agentic AI platforms like Perplexity that now offer Nemotron 3 Super to automate complex multi-step workflows in your business

- Consider testing AI agents for tasks requiring reasoning across multiple steps, as the 5x throughput improvement makes these applications more cost-effective

- Watch for integration opportunities where autonomous agents could replace manual processes that currently require multiple tool switches

Source: NVIDIA AI Blog

planning

research

communication

Productivity & Automation

OpenAI has released technical infrastructure that transforms their API from simple question-answer interactions into full agents capable of executing tasks in secure container environments. This enables AI to autonomously run code, manage files, and maintain state across complex workflows—moving beyond chat responses to actual task completion. For professionals, this signals a shift toward AI systems that can handle multi-step processes independently rather than requiring constant human guidance

Key Takeaways

- Evaluate whether your current AI workflows could benefit from autonomous task execution rather than simple Q&A interactions

- Consider how agent-based systems could automate multi-step processes in your work that currently require manual oversight between AI queries

- Watch for new tools and platforms built on this infrastructure that may offer more sophisticated automation capabilities

Source: OpenAI Blog

code

planning

documents

Productivity & Automation

Researchers have developed a new way to evaluate AI agents that perform computer tasks by analyzing video recordings of their actions, rather than examining their internal code. This breakthrough could lead to more reliable AI assistants that can autonomously complete multi-step computer tasks across different operating systems, with better verification that they actually accomplished what you asked.

Key Takeaways

- Watch for AI automation tools that can verify task completion across Windows, macOS, and Android without requiring specific integration with each platform

- Consider that future AI assistants may be evaluated based on visual confirmation of completed tasks, making them more trustworthy for delegating complex workflows

- Expect improvements in AI agents that can handle multi-step computer tasks with better accuracy verification (84.7% success rate demonstrated)

Source: arXiv - Computer Vision

planning

code

Productivity & Automation

Research comparing GPT-4o, o4-mini, and GPT-5-mini found empathy levels remained statistically unchanged across models, but safety behaviors shifted significantly: newer models detect crises better but sometimes over-respond with excessive caution. For professionals using AI in sensitive communications—customer support, HR, coaching—this means understanding that perceived personality changes are actually safety trade-offs that affect how models handle emotionally charged situations.

Key Takeaways

- Recognize that perceived 'empathy loss' in newer AI models is actually a shift in safety posture, not emotional capability—adjust your expectations accordingly when upgrading models

- Test AI responses in mid-conversation crisis scenarios if you use chatbots for customer support, mental health resources, or sensitive communications—aggregate testing misses critical behavioral shifts

- Consider keeping access to multiple model versions for different use cases: older models for general empathetic tone, newer ones where crisis detection matters

Source: arXiv - Computation and Language (NLP)

communication

research

Productivity & Automation

Research shows that adding human feedback to AI-powered interview preparation delivers better results than purely automated approaches, requiring 5x fewer iterations while significantly improving candidate confidence and authenticity. The study reveals that AI interview tools hit limitations based on available context rather than computational power, suggesting that hybrid human-AI workflows outperform fully automated solutions for complex evaluation tasks.

Key Takeaways

- Consider implementing human-in-the-loop workflows when using AI for complex evaluations or training scenarios—hybrid approaches require fewer iterations and produce more authentic results than pure automation

- Recognize that AI tools for interview prep or performance evaluation may plateau quickly due to context limitations, not processing power—adding more prompts won't necessarily improve output quality

- Expect diminishing returns after initial AI iterations in evaluation tasks—the first round of AI feedback provides the most value, with subsequent automated refinements offering minimal improvement

Source: arXiv - Computation and Language (NLP)

communication

planning

Productivity & Automation

Researchers have developed a simple, user-focused rating system (SHS) to measure how often AI models hallucinate or produce unreliable information from a real user's perspective. Unlike automated detection tools, this 10-question survey helps organizations systematically evaluate whether their AI systems are producing factual, coherent responses during actual work scenarios. The validated instrument provides a standardized way to compare different AI tools and track improvements over time.

Key Takeaways

- Consider implementing systematic hallucination assessments when evaluating AI tools for your team, using user-perspective surveys rather than relying solely on vendor claims

- Track hallucination patterns in your AI workflows by periodically surveying team members about factual accuracy, coherence, and misleading outputs in their daily interactions

- Compare AI tools more objectively by using standardized measurement approaches that capture real-world user experiences rather than just technical benchmarks

Source: arXiv - Computation and Language (NLP)

research

documents

communication

Productivity & Automation

Researchers have developed a system that allows AI agents to learn from their past mistakes and successes, automatically extracting lessons from previous task executions to improve future performance. This framework analyzes what went wrong or right in agent workflows, then retrieves relevant guidance when facing similar situations—showing up to 149% improvement on complex tasks in testing.

Key Takeaways

- Expect future AI agent tools to become significantly smarter over time by learning from their execution history rather than repeating the same mistakes

- Watch for AI assistants that can recognize when they've encountered similar problems before and apply previously successful recovery strategies

- Consider that complex, multi-step workflows will benefit most from these self-improving agents, with research showing up to a 149% improvement (roughly 2.5x the baseline performance) on difficult tasks
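The record-then-retrieve loop described here can be illustrated with a toy lesson store. This is not the researchers' framework: the `LessonStore` class is hypothetical, and plain word overlap stands in for whatever retrieval method the paper actually uses.

```python
from dataclasses import dataclass, field

@dataclass
class Lesson:
    situation: str   # short description of the task context
    advice: str      # what to do (or avoid) next time
    succeeded: bool  # whether the original attempt worked

@dataclass
class LessonStore:
    lessons: list = field(default_factory=list)

    def record(self, situation, advice, succeeded):
        """Save a lesson extracted from a finished task execution."""
        self.lessons.append(Lesson(situation, advice, succeeded))

    def retrieve(self, situation, top_k=2):
        """Return up to top_k lessons sharing the most words with `situation`."""
        query = set(situation.lower().split())
        scored = []
        for lesson in self.lessons:
            overlap = len(query & set(lesson.situation.lower().split()))
            if overlap:
                scored.append((overlap, lesson))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [lesson for _, lesson in scored[:top_k]]

store = LessonStore()
store.record("login form timeout on submit", "wait for page load, then retry", True)
store.record("csv export missing header", "re-run export with headers enabled", False)
hits = store.retrieve("timeout when submitting login form")
```

In a real agent, the retrieved advice would be added to the prompt before the next attempt at a similar task.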

Source: arXiv - Artificial Intelligence

planning

research

Productivity & Automation

Researchers have developed a new memory system that helps AI agents navigate computer interfaces more effectively by mimicking how human memory works. This breakthrough enables smaller, open-source AI models to match premium services like GPT-4o on computer tasks, potentially reducing costs for businesses automating repetitive computer workflows. The technology shows particular promise for AI agents that need to complete multi-step tasks across different applications.

Key Takeaways

- Monitor emerging AI agent tools that incorporate this memory approach, as they may offer enterprise-grade automation at lower costs than current premium solutions

- Consider how improved GUI agents could automate repetitive multi-step workflows in your business, such as data entry across multiple systems or routine software testing

- Evaluate open-source AI agent solutions more seriously, as this research demonstrates they can now match closed-source alternatives for computer automation tasks

Source: arXiv - Artificial Intelligence

planning

code

Productivity & Automation

Replit Agent 4 represents an evolution in AI-powered development agents that can handle knowledge work tasks beyond just coding. This release signals a shift toward AI agents that can manage broader professional workflows, potentially automating more complex, multi-step tasks that previously required human oversight.

Key Takeaways

- Monitor Replit Agent 4's capabilities for automating knowledge work tasks that extend beyond traditional coding assistance

- Consider how AI agents are evolving from single-task tools to workflow orchestrators that can handle multi-step professional projects

- Evaluate whether agent-based tools like Replit could replace or augment current workflows for documentation, planning, and project management

Source: Latent Space

code

documents

planning

Productivity & Automation

Legendary programmer John Carmack warns against over-engineering solutions for hypothetical future needs—a principle directly applicable to AI tool implementation. When integrating AI into workflows, focus on solving current, concrete problems rather than building elaborate systems for imagined future scenarios. This YAGNI (You Aren't Gonna Need It) principle helps avoid wasted time and complexity in AI adoption.

Key Takeaways

- Start with AI tools that solve immediate, specific problems in your current workflow rather than building comprehensive systems for potential future needs

- Resist the temptation to create complex AI automation frameworks before you've validated simpler, focused solutions

- Evaluate AI tool purchases and implementations based on present-day use cases, not speculative future applications

Source: Simon Willison's Blog

planning

code

Productivity & Automation

This marketing principle about focused messaging applies directly to AI tool selection and prompt engineering. When evaluating AI solutions or crafting prompts, professionals should prioritize tools and requests that promise one clear outcome rather than those claiming to do everything—single-purpose focus typically delivers more reliable results.

Key Takeaways

- Evaluate AI tools based on their primary strength rather than feature lists—specialized tools often outperform all-in-one solutions for specific tasks

- Structure prompts with one clear objective instead of multiple competing goals to improve output quality and consistency

- Consider splitting complex AI workflows into single-purpose steps rather than asking one tool to handle everything at once
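Splitting a workflow into single-purpose steps might look like the sketch below. The `run_pipeline` helper is hypothetical, and the plain string functions stand in for separate model calls or tools, each with one clear objective.

```python
def run_pipeline(text, steps):
    """Run single-purpose steps in order, feeding each output to the next step.

    steps: list of (name, function) pairs; each function takes and returns a string.
    Returns a list of (name, output) pairs so every stage can be inspected.
    """
    results = []
    current = text
    for name, step in steps:
        current = step(current)
        results.append((name, current))
    return results

# Stand-ins for separate single-purpose prompts or tools.
steps = [
    ("clean", lambda t: t.strip()),
    ("shorten", lambda t: t[:5]),
]
stages = run_pipeline("  hello world ", steps)
```

Keeping each stage's output makes it easier to see which step produced a bad result, which is harder when one prompt tries to do everything at once.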

Source: HubSpot Marketing Blog

planning

communication

Productivity & Automation

PersonaPlex enables professionals to run real-time, interruptible speech-to-speech AI conversations directly on their local machines without cloud dependencies. This technology allows for natural voice interactions where you can interrupt the AI mid-response, similar to human conversation, while maintaining complete data privacy through local processing.

Key Takeaways

- Install PersonaPlex locally to test real-time voice AI capabilities without sending audio data to external servers

- Evaluate interruptible speech technology for customer service applications, virtual assistants, or accessibility tools in your workflow

- Consider local speech-to-speech models for sensitive business conversations where cloud-based solutions pose privacy concerns

Source: KDnuggets

communication

meetings

documents

Productivity & Automation

This research introduces a new approach to building AI agents that grow through ongoing conversations rather than upfront programming. Instead of coding all expertise into an agent before deployment, professionals can start with a basic agent and develop it incrementally through daily interactions, with the system automatically capturing and organizing useful knowledge patterns over time.

Key Takeaways

- Consider starting with simpler AI agents and developing them through actual use rather than trying to build comprehensive systems upfront

- Track recurring patterns in your AI conversations that could be formalized into reusable workflows or templates

- Expect future AI tools that learn from your working style through interaction rather than requiring extensive initial configuration

Source: arXiv - Artificial Intelligence

planning

research

Productivity & Automation

Researchers tested whether AI vision models can reliably evaluate computer-use agents (AI that controls your desktop) and found significant limitations. While these AI auditors show promise, they struggle with complex tasks and often disagree with each other, meaning organizations deploying autonomous desktop agents can't yet fully trust automated quality checks without human oversight.

Key Takeaways

- Approach automated agent deployments cautiously—current AI evaluation systems show notable performance drops in complex or varied desktop environments

- Plan for human oversight when implementing computer-use agents, as even advanced AI auditors disagree significantly on whether tasks were completed successfully

- Consider the reliability gap before scaling autonomous desktop agents across your organization, especially for critical workflows

Source: arXiv - Artificial Intelligence

planning

research

Productivity & Automation

The article explores how constant digital distraction prevents deep self-reflection and awareness. For professionals relying on AI tools, this raises questions about whether automation and always-on productivity systems may be preventing the quiet thinking time needed for strategic decision-making and creative problem-solving.

Key Takeaways

- Schedule deliberate breaks from AI-assisted workflows to allow for unstructured thinking and reflection

- Recognize when using productivity tools becomes a form of productive procrastination that avoids deeper work

- Consider whether constant AI assistance is preventing you from developing independent problem-solving skills

Source: Fast Company

planning

meetings

Productivity & Automation

RevOps tools are evolving to help businesses align sales, marketing, and customer success teams through shared data and automation. For professionals managing cross-functional workflows, modern RevOps platforms now offer practical solutions to reduce data silos and improve team coordination. This matters if you're struggling to connect customer data across different departments or looking to streamline revenue-generating processes.

Key Takeaways

- Evaluate RevOps tools if your teams are working from disconnected data sources across sales, marketing, and customer success

- Consider implementing RevOps automation to reduce manual data entry and improve cross-team visibility into customer interactions

- Look for platforms that integrate with your existing CRM and marketing tools to create a unified view of revenue operations

Source: Zapier AI Blog

planning

communication

Productivity & Automation

Zapier's 2026 roundup of social media management tools highlights how AI integration has become standard across platforms for scheduling, content creation, and analytics. For professionals managing business social presence, these tools now offer AI-powered features to streamline multi-platform posting and engagement tracking, making social media management more efficient alongside other daily workflows.

Key Takeaways

- Evaluate social media management tools with built-in AI features to consolidate your posting workflow across multiple platforms

- Consider automation capabilities that connect social media scheduling with your existing business tools and CRM systems

- Look for analytics features that provide actionable insights on engagement patterns to optimize posting times and content strategy

Source: Zapier AI Blog

communication

planning