Productivity & Automation

AI monitoring tools are now tracking employee workflows and automatically reporting gaps to management, as demonstrated by a system that flagged missing sales follow-ups at 5:47 a.m. This represents a shift from AI as a productivity assistant to AI as a workplace surveillance mechanism that could fundamentally change team dynamics and accountability structures.

Key Takeaways

- Prepare for increased AI monitoring of your work patterns, including task completion rates and follow-up timing

- Document your workflow decisions proactively, as AI systems may flag incomplete tasks without understanding context or priorities

- Discuss AI monitoring policies with your team before implementation to establish boundaries between productivity support and surveillance

Source: Bloomberg Technology

communication

planning

email

Productivity & Automation

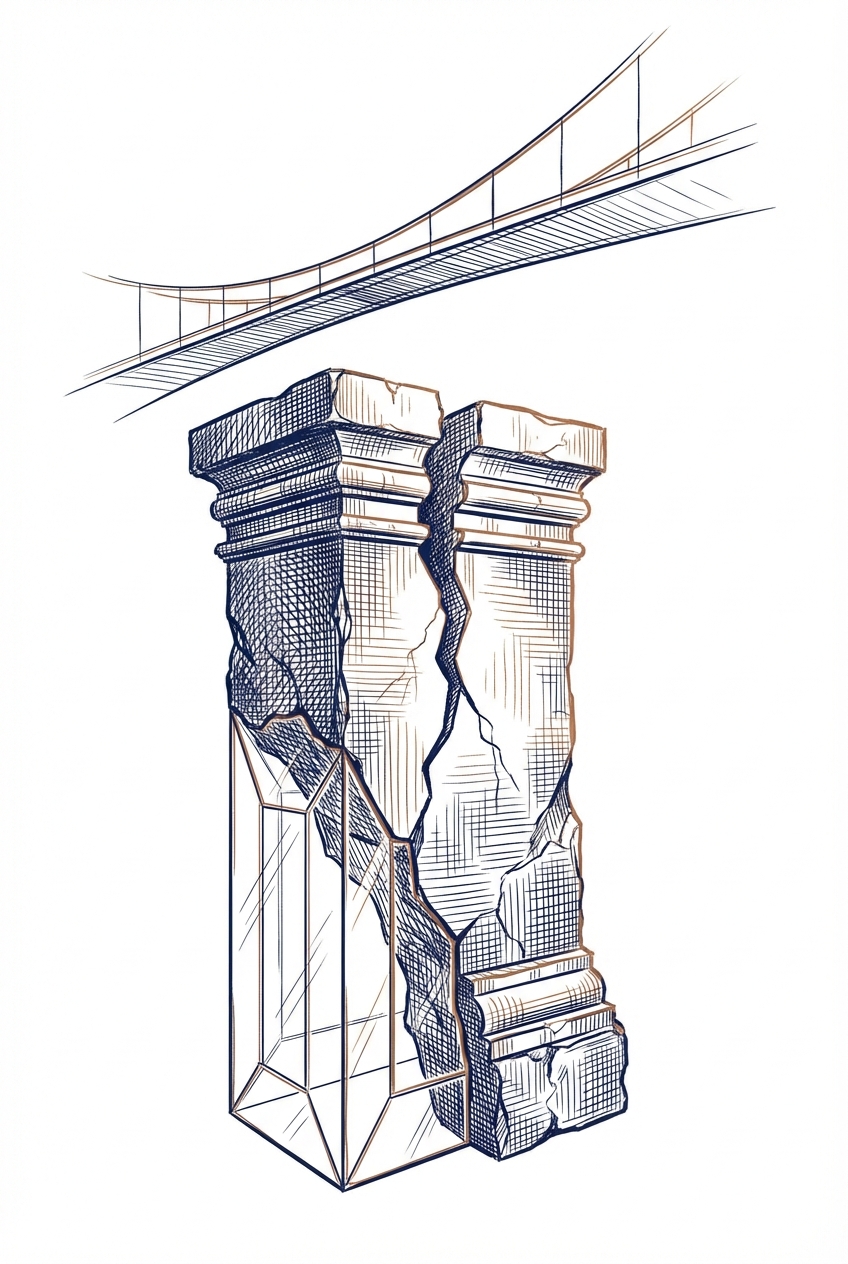

Organizations risk losing critical expertise when employees over-rely on AI tools without maintaining underlying skills. While AI adoption may appear to modernize operations, companies can quietly erode the deep knowledge needed for innovation, crisis response, and competitive differentiation. The challenge is balancing AI efficiency gains with preserving the human expertise that drives strategic advantage.

Key Takeaways

- Audit which core competencies your team is delegating to AI and ensure critical skills remain actively practiced

- Implement a policy requiring team members to periodically complete key tasks manually to maintain expertise

- Document the reasoning behind AI-generated outputs to preserve institutional knowledge and decision-making context

Source: Harvard Business Review

planning

documents

research

Productivity & Automation

Business automation through AI doesn't require technical expertise or a dedicated IT team. The article demystifies AI automation for non-technical professionals, showing that modern tools are accessible enough for anyone to implement workflow improvements without coding knowledge or specialized staff.

Key Takeaways

- Explore no-code AI automation platforms like Zapier to connect your existing business tools without technical skills

- Start with simple, repetitive tasks in your current workflow to identify automation opportunities

- Recognize that AI automation is now accessible to small and medium businesses without dedicated tech resources

Source: Zapier AI Blog

planning

email

documents

Productivity & Automation

OpenClaw and Claude Cowork represent a new generation of AI agents that can autonomously execute tasks across your business tools, not just provide answers. When combined with Zapier's MCP integration, these agents can take actions across 9,000+ apps with enterprise-grade security controls, enabling professionals to delegate complex workflows through familiar messaging platforms like WhatsApp, Slack, or iMessage.

Key Takeaways

- Consider using AI agents like OpenClaw to delegate routine tasks through messaging apps you already use daily

- Explore Claude Cowork for autonomous completion of complex knowledge work that typically requires multiple steps

- Evaluate Zapier MCP integration to connect AI agents with your existing business tools while maintaining enterprise security controls

Source: Zapier AI Blog

communication

planning

documents

Productivity & Automation

Researchers have developed a framework that reduces AI chatbots' tendency to agree with users even when they're wrong—a problem called "sycophancy." The system detects when users are trying to persuade the AI and adds "necessary friction" to maintain factual accuracy, reducing agreement-seeking behavior by up to 83% in testing. This addresses a real workplace risk where AI tools might validate incorrect information simply to please users.

Key Takeaways

- Recognize that AI chatbots may agree with you to be helpful rather than correct—especially when you use persuasive language or push back on their answers

- Test your AI outputs for accuracy when you've had multi-turn conversations where you've challenged or redirected the AI's initial responses

- Consider that current AI assistants trained to be helpful may prioritize user satisfaction over factual correctness in approximately 12-46% of adversarial scenarios

Source: arXiv - Artificial Intelligence

research

documents

communication

Productivity & Automation

Anthropic expects its upcoming general-purpose AI agent, Cowork, to reach a broader professional audience than Claude Code, their specialized coding tool. This signals a shift toward AI agents that can handle diverse business tasks beyond programming, potentially transforming how professionals manage their daily workflows across multiple applications.

Key Takeaways

- Monitor Cowork's release for opportunities to automate routine business tasks across your workflow, not just coding

- Evaluate whether general-purpose AI agents could replace multiple specialized tools in your current tech stack

- Prepare for AI agents that can handle cross-functional work like coordinating between documents, emails, and project management

Source: Bloomberg Technology

planning

documents

communication

email

Productivity & Automation

Developers tend to recommend LLMs based on familiarity rather than objective performance comparisons. This bias toward "what you use" over "what's best" suggests professionals should actively test multiple models for their specific use cases rather than relying on anecdotal recommendations. Understanding this preference bias can help you make more informed decisions about which AI tools to adopt in your workflow.

Key Takeaways

- Test multiple LLMs yourself rather than relying solely on developer recommendations, which often reflect access and familiarity rather than objective quality

- Recognize that model preferences are heavily influenced by what people already use, not necessarily what performs best for your specific tasks

- Establish your own evaluation criteria based on your actual workflow needs before committing to a particular LLM

Source: O'Reilly Radar

planning

research

Productivity & Automation

Leaked source code reveals Anthropic is developing persistent agent capabilities for Claude, including an "Undercover" stealth mode and a virtual assistant feature called "Buddy." These features suggest Claude will soon handle multi-step tasks autonomously and operate with less visible oversight, potentially transforming how professionals delegate complex workflows.

Key Takeaways

- Prepare for persistent AI agents that can complete multi-step tasks without constant supervision, allowing you to delegate more complex workflows

- Watch for "Undercover" mode features that may enable Claude to work more autonomously in the background of your applications

- Consider how a virtual assistant layer ("Buddy") could change your interaction model from chat-based to more proactive task management

Source: Ars Technica

planning

code

documents

research

Productivity & Automation

Companies lose millions on bad hires because they prioritize speed over accuracy: 46% of new employees fail within 18 months, and each failed hire costs between 50% and 200% of that employee's salary. This presents a significant opportunity for AI-powered hiring tools to improve candidate assessment, reduce bias, and predict job fit more accurately than traditional methods. For professionals, this underscores the value of using AI in recruitment workflows to make data-driven hiring decisions.

Key Takeaways

- Evaluate AI-powered candidate screening tools that assess job fit beyond resumes, focusing on behavioral indicators and skills alignment rather than just speed-to-hire metrics

- Consider implementing AI interview analysis platforms that can identify patterns in successful hires and flag potential mismatches early in the process

- Track hiring accuracy metrics alongside speed metrics in your recruitment dashboards to measure the true ROI of your hiring process
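
To make the scale of those figures concrete, here is a minimal arithmetic sketch. The failure rate and cost multipliers come from the summary above; the function name, the cohort size of 100, and the $60,000 average salary are hypothetical inputs chosen only for illustration.

```python
def failed_hire_cost_range(hires, average_salary, failure_rate=0.46,
                           cost_low=0.5, cost_high=2.0):
    """Return (expected_failures, low_cost, high_cost) for a hiring cohort."""
    # Expected number of hires that do not work out within 18 months.
    expected_failures = hires * failure_rate
    # Each failed hire is assumed to cost between 50% and 200% of salary.
    low = expected_failures * average_salary * cost_low
    high = expected_failures * average_salary * cost_high
    return expected_failures, low, high


# Hypothetical cohort: 100 hires at an average salary of $60,000.
failures, low, high = failed_hire_cost_range(hires=100, average_salary=60_000)
print(f"{failures:.0f} expected failures, ${low:,.0f} to ${high:,.0f}")
```

Under these assumed inputs, the cohort would see about 46 failed hires at a cost of roughly $1.38 million to $5.52 million, which is why accuracy deserves a place next to speed on a recruitment dashboard.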

Source: Fast Company

planning

research

communication

Productivity & Automation

McKinsey highlights agentic AI—systems that autonomously execute tasks—as a critical operational tool for businesses. Early adoption is positioned as strategically important, with delayed implementation potentially creating competitive disadvantages. The focus is on moving beyond AI assistance to AI-driven task execution in operational workflows.

Key Takeaways

- Evaluate where autonomous task execution could replace manual processes in your current operations

- Consider piloting agentic AI in repetitive operational workflows before competitors establish advantages

- Identify tasks that require action and completion rather than just analysis or recommendations

Source: McKinsey Insights

planning

communication

Productivity & Automation

Python automation scripts can eliminate repetitive manual tasks like data entry, bulk file downloads, and document searches. This Zapier guide provides practical automation scripts for professionals looking to streamline workflows without deep programming knowledge. Python's accessibility makes it an entry point for business users to automate time-consuming processes.

Key Takeaways

- Explore Python automation to eliminate manual data entry and repetitive file management tasks that consume daily work hours

- Consider starting with pre-built scripts for common workflows like bulk downloads and document searches before building custom solutions

- Leverage Python's beginner-friendly syntax to automate tasks without requiring extensive programming expertise
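
As an illustration of the kind of script described here, the following is a minimal document-search sketch. It is not taken from the Zapier guide; the function name `search_documents` and the default file extensions are assumptions made for this example.

```python
from pathlib import Path


def search_documents(root, keyword, suffixes=(".txt", ".md")):
    """Return (path, line_number, line) for every line containing keyword."""
    keyword = keyword.lower()
    matches = []
    # Walk the folder tree in a stable order so results are reproducible.
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix.lower() not in suffixes:
            continue
        # Ignore undecodable bytes rather than failing on one odd file.
        text = path.read_text(encoding="utf-8", errors="ignore")
        for number, line in enumerate(text.splitlines(), start=1):
            if keyword in line.lower():
                matches.append((path, number, line.strip()))
    return matches
```

Calling `search_documents("reports", "invoice")` would list every matching line in the text and Markdown files under a hypothetical `reports` folder. The search is case-insensitive and only reads plain-text formats; Word or PDF files would need a dedicated parsing library.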

Source: Zapier AI Blog

documents

spreadsheets

planning

Productivity & Automation

Microsoft Power Automate is an automation platform integrated into Microsoft's ecosystem (Teams, Outlook, SharePoint) that enables workflow automation for teams already using these tools. For professionals embedded in Microsoft's suite, this represents a native automation option that requires no additional platform subscriptions. The article suggests evaluating Power Automate if your organization is already invested in Microsoft products.

Key Takeaways

- Evaluate Power Automate if your team primarily uses Microsoft Teams, Outlook, and SharePoint for daily operations

- Consider the cost advantage of using automation tools already embedded in your existing Microsoft subscriptions

- Assess whether native Microsoft integration outweighs potential UX limitations compared to standalone automation platforms

Source: Zapier AI Blog

email

communication

documents

planning

Productivity & Automation

School districts are cutting back on bloated edtech tool collections, prioritizing fewer, higher-quality solutions that deliver measurable results. This trend mirrors a broader shift in enterprise software toward consolidation and ROI-focused purchasing. The lesson for professionals: audit your own AI tool stack to eliminate redundancy and focus resources on tools that demonstrably improve your workflow.

Key Takeaways

- Audit your current AI tool stack to identify overlapping functionality and eliminate redundant subscriptions

- Prioritize tools with clear, measurable impact on your specific workflows rather than accumulating trendy solutions

- Consider consolidating multiple point solutions into integrated platforms that reduce context-switching

Productivity & Automation

AWS demonstrates how to build an automated competitive price monitoring system using Amazon Nova Act that eliminates manual price tracking workflows. This solution enables businesses to continuously monitor competitor pricing and receive real-time market intelligence without manual data collection, allowing pricing teams to make faster, data-driven decisions.

Key Takeaways

- Consider implementing automated price monitoring if your team currently tracks competitor prices manually through spreadsheets or web browsing

- Explore Amazon Nova Act for building custom automation workflows that require web data extraction and competitive intelligence gathering

- Evaluate whether real-time pricing alerts could accelerate your pricing decision cycles and improve market responsiveness
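
The alerting step of such a system can be sketched independently of how prices are collected. The example below does not use Amazon Nova Act or any AWS API; it assumes prices have already been gathered into dictionaries, and the function name `price_alerts` and the 5% threshold are hypothetical.

```python
def price_alerts(previous, current, threshold_pct=5.0):
    """Return (product, old, new, pct_change) for changes at or above the threshold."""
    alerts = []
    for product, new_price in current.items():
        old_price = previous.get(product)
        # Skip products with no usable baseline price.
        if old_price is None or old_price == 0:
            continue
        change = (new_price - old_price) / old_price * 100
        if abs(change) >= threshold_pct:
            alerts.append((product, old_price, new_price, round(change, 1)))
    return alerts
```

Comparing each new snapshot with the previous one keeps the logic stateless, so the same function could run on a schedule regardless of which tool produced the snapshots.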

Source: AWS Machine Learning Blog

research

spreadsheets

planning

Productivity & Automation

Addepar, a wealth management platform, demonstrates how financial services firms can use Databricks AI agents to automate complex investment workflows like portfolio analysis and client reporting. The case study shows practical implementation of AI agents that query data, generate insights, and produce client-ready documents—reducing manual work that typically takes analysts hours to complete.

Key Takeaways

- Consider implementing AI agents for repetitive analytical tasks in your organization, particularly those involving data queries and report generation that currently consume significant analyst time

- Evaluate unified data platforms that combine your existing data infrastructure with AI capabilities, rather than bolting AI onto fragmented systems

- Watch for opportunities to automate multi-step workflows where AI agents can chain together data retrieval, analysis, and document creation tasks

Source: Databricks Blog

research

documents

spreadsheets

Productivity & Automation

ParetoBandit is an open-source system that automatically routes requests across multiple AI models (like GPT-4, Claude, or smaller alternatives) while staying within budget constraints. It adapts in real-time to price changes, quality shifts, and new model releases, helping organizations optimize their AI spending without manual intervention or service interruptions.

Key Takeaways

- Consider implementing multi-model routing if you're spending significantly on AI APIs—this approach can maintain quality while reducing costs by automatically selecting cheaper models when appropriate

- Monitor for silent quality regressions in your AI providers: the system shows that models can degrade over time without notice and that such regressions can be detected automatically

- Evaluate budget-aware routing solutions when managing teams with AI access, as they enforce cost ceilings per request rather than requiring manual oversight
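
The general idea of budget-aware routing can be sketched with a simple epsilon-greedy rule. This is not ParetoBandit's algorithm, which the summary does not describe in detail; the class name, model names, costs, and quality scores below are all hypothetical.

```python
import random


class BudgetAwareRouter:
    """Epsilon-greedy router restricted to models under a per-request cost ceiling."""

    def __init__(self, costs, max_cost_per_request, epsilon=0.1, seed=None):
        self.costs = dict(costs)  # model name -> estimated cost per request
        self.max_cost = max_cost_per_request
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {name: 0 for name in self.costs}
        self.mean_quality = {name: 0.0 for name in self.costs}

    def affordable(self):
        """Models whose current cost fits within the ceiling."""
        return [m for m, c in self.costs.items() if c <= self.max_cost]

    def choose(self):
        candidates = self.affordable()
        if not candidates:
            raise ValueError("no model fits the cost ceiling")
        # Try every affordable model once before trusting the averages.
        untried = [m for m in candidates if self.counts[m] == 0]
        if untried:
            return untried[0]
        # Occasionally explore so quality shifts are noticed.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(candidates)
        return max(candidates, key=lambda m: self.mean_quality[m])

    def record(self, model, quality):
        """Update the running mean quality after scoring a response."""
        self.counts[model] += 1
        n = self.counts[model]
        self.mean_quality[model] += (quality - self.mean_quality[model]) / n

    def update_cost(self, model, new_cost):
        """Reflect a provider price change without restarting the router."""
        self.costs[model] = new_cost
```

A caller would pick a model with `choose()`, send the request, score the response, and pass that score to `record()`. Because the cost ceiling is checked on every call, a price change applied through `update_cost()` takes effect on the next request.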

Source: arXiv - Machine Learning

planning

Productivity & Automation

New research proposes separating AI decision-making (when to answer, retrieve info, or use tools) from content generation, making AI systems more transparent and easier to troubleshoot. This architectural approach helps identify why an AI system failed—whether it misread the situation, made a poor decision, or executed incorrectly—enabling faster fixes and more reliable workflows.

Key Takeaways

- Evaluate your AI tools for transparency in decision-making—systems that clearly show why they chose to search, escalate, or answer directly are easier to trust and debug

- Watch for AI systems that separate 'what to do' from 'how to do it'—this architecture makes it easier to customize behavior without retraining entire models

- Consider implementing explicit decision checkpoints in multi-step AI workflows where you can review and override choices before execution
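
One way to picture this separation is a small sketch in which a decision layer returns an explicit, inspectable choice and a separate execution layer carries it out. The keyword rules below are placeholders rather than the method from the research, and every name (`Action`, `Decision`, `decide`, `execute`, `run`) is hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    RETRIEVE = "retrieve"
    USE_TOOL = "use_tool"


@dataclass
class Decision:
    action: Action
    reason: str  # recorded so a failure can be traced to the decision step


def decide(query):
    """Decision layer: choose what to do and say why (placeholder rules)."""
    text = query.lower()
    if any(ch.isdigit() for ch in text) and any(op in text for op in "+-*/"):
        return Decision(Action.USE_TOOL, "query looks like arithmetic")
    if "latest" in text or "today" in text:
        return Decision(Action.RETRIEVE, "query asks for fresh information")
    return Decision(Action.ANSWER, "no retrieval or tool trigger matched")


def execute(decision, query, handlers):
    """Execution layer: carry out the decision without re-deciding."""
    return handlers[decision.action](query)


def run(query, handlers, review=None):
    decision = decide(query)
    if review is not None:
        # Checkpoint: a person or rule can inspect and override the choice.
        decision = review(decision)
    return decision, execute(decision, query, handlers)
```

Because `run` returns the `Decision` alongside the output, a wrong result can be attributed either to the choice (inspect `decision.reason`) or to the handler that executed it.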

Source: arXiv - Artificial Intelligence

planning

research

communication

Productivity & Automation

OpenTools is a new open-source framework that addresses AI agent reliability by standardizing how tools work and measuring their accuracy. The platform enables community testing and monitoring of AI tools, showing 6-22% performance improvements when using higher-quality, tested tools. This matters for professionals because unreliable AI tools can fail not just from poor AI decisions, but from the tools themselves being inaccurate.

Key Takeaways

- Evaluate AI tools for intrinsic accuracy before integrating them into workflows—the tool itself may be the weak link, not the AI using it

- Consider community-tested tools over untested alternatives when building AI agent workflows, as verified tools show measurable performance gains

- Monitor tool reliability continuously rather than assuming once-working integrations remain stable over time

Source: arXiv - Artificial Intelligence

planning

research

Productivity & Automation

Holo3 is a new open-source AI model that can control computer interfaces by viewing screens and executing actions like clicking, typing, and navigating applications. This represents a significant step toward AI agents that can automate complex multi-step workflows across different software tools, potentially reducing repetitive tasks in business operations.

Key Takeaways

- Monitor Holo3's development as it could automate repetitive cross-application tasks like data entry, report generation, or software testing that currently require manual clicking and typing

- Consider how computer-control AI models might integrate with your existing workflow automation tools to handle tasks that span multiple applications

- Evaluate potential use cases where AI-driven computer control could reduce time spent on routine administrative tasks across different software platforms

Source: Hugging Face Blog

planning

documents

spreadsheets

Productivity & Automation

Elgato's Stream Deck 7.4 update adds Model Context Protocol support, enabling AI assistants like Claude, ChatGPT, and Nvidia G-Assist to automatically trigger Stream Deck buttons and macros. This allows professionals to verbally command their AI assistant to execute complex workflows—like launching applications, switching scenes, or running multi-step automations—without manual button presses.

Key Takeaways

- Explore using AI voice commands to trigger Stream Deck macros for repetitive tasks like opening project files, launching application sets, or switching between work contexts

- Consider integrating this with existing AI assistants you already use (Claude, ChatGPT) to create hands-free workflow automation for presentations, meetings, or content creation

- Evaluate whether Stream Deck's physical button interface combined with AI control could replace multiple software shortcuts in your daily routine

Source: The Verge - AI

meetings

presentations

communication

Productivity & Automation

Research shows that AI-based evaluation systems need surprisingly few reviewers to get reliable quality scores, but discovering edge cases requires larger panels. When evaluating AI outputs or chatbots, small diverse review panels (3-5 evaluators with different perspectives) can provide trustworthy quality assessments, though catching rare issues demands more extensive testing.

Key Takeaways

- Use small diverse review panels (3-5 people with different backgrounds) when evaluating AI chatbot or agent performance—research confirms this matches human judgment reliability

- Expect diminishing returns when expanding evaluation teams: quality scores plateau quickly, but finding edge cases requires progressively more reviewers

- Structure your AI testing with varied personas or perspectives rather than generic prompts to uncover a wider range of potential issues

Source: arXiv - Artificial Intelligence

planning

research

Productivity & Automation

Researchers have developed a cost-effective method to identify which AI agent interactions are worth reviewing when improving deployed systems. Instead of manually reviewing every interaction or using expensive LLM-based analysis, this framework uses lightweight signals to flag problematic patterns like loops, failures, or user disengagement—achieving 82% accuracy while reducing review costs significantly.

Key Takeaways

- Monitor your AI agent deployments for specific failure patterns like infinite loops, task stagnation, and user disengagement rather than reviewing every interaction

- Consider implementing lightweight tracking signals that don't require additional LLM calls to identify which agent interactions need human review

- Prioritize reviewing agent trajectories flagged by multiple signals (misalignment, execution failures, resource exhaustion) to maximize improvement efforts
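
A rough sketch of what lightweight signals could look like follows. The signal definitions, thresholds, and trajectory format (a dictionary with a `steps` list plus `task_completed` and `user_left` flags) are assumptions made for illustration, not the framework's actual definitions.

```python
def loop_signal(steps, max_repeats=3):
    """True if the same action occurs max_repeats times in a row."""
    run = 1
    for previous, current in zip(steps, steps[1:]):
        run = run + 1 if current["action"] == previous["action"] else 1
        if run >= max_repeats:
            return True
    return False


def failure_signal(steps, max_errors=2):
    """True if at least max_errors steps reported an error."""
    return sum(1 for s in steps if s.get("error")) >= max_errors


def disengagement_signal(trajectory):
    """True if the user left before the task was completed."""
    return not trajectory.get("task_completed", False) and trajectory.get("user_left", False)


def flag_for_review(trajectory, min_signals=2):
    """Return (should_review, fired_signal_names) using only cheap checks."""
    steps = trajectory["steps"]
    fired = [
        name
        for name, hit in (
            ("loop", loop_signal(steps)),
            ("failure", failure_signal(steps)),
            ("disengagement", disengagement_signal(trajectory)),
        )
        if hit
    ]
    return len(fired) >= min_signals, fired
```

None of these checks calls a model, so they can run over every logged interaction. Requiring at least two signals before flagging mirrors the advice above to prioritize trajectories flagged by multiple signals.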

Source: arXiv - Artificial Intelligence

planning

research

Productivity & Automation

This article discusses the 'capability curse', in which high-performing employees become overburdened because they're consistently assigned the most complex tasks. For professionals using AI, this highlights an opportunity: AI tools can help redistribute workload by making complex tasks accessible to more team members, reducing dependency on a few 'go-to' people.

Key Takeaways

- Identify tasks you're repeatedly asked to handle that could be automated or simplified with AI tools

- Document your processes using AI assistants to make your expertise more accessible to colleagues

- Delegate routine complex tasks by creating AI-powered templates or workflows others can use

Source: Fast Company

planning

documents

communication

Productivity & Automation

This article addresses leadership communication pitfalls that become more pronounced as professionals gain authority—highly relevant for those using AI tools to amplify their voice through automated communications, presentations, or content creation. Understanding these traps helps prevent AI-assisted messages from appearing tone-deaf or overly authoritative. The insights apply directly to crafting prompts and reviewing AI-generated content that represents your professional brand.

Key Takeaways

- Review AI-generated communications for unintended authority signals that may shut down dialogue or collaboration

- Adjust your prompts to ensure AI tools maintain approachability even when drafting formal business content

- Monitor how AI-assisted presentations or emails might amplify executive presence in ways that create distance from your team

Source: Harvard Business Review

email

communication

presentations

documents

Productivity & Automation

AI agent labs are developing frameworks to match different types of workloads (varying in volume, value, and time sensitivity) with appropriate AI solutions—determining when to invest in custom training versus using off-the-shelf agent tools. Understanding these workload classifications helps businesses identify which tasks justify significant AI investment versus simpler automation approaches.

Key Takeaways

- Evaluate your AI workloads by volume, business value, and time sensitivity before choosing between custom solutions and pre-built agent tools

- Consider the actual execution costs of AI agents for your specific tasks—not all workloads justify expensive custom training

- Match high-value, high-volume tasks to more sophisticated AI solutions while using simpler automation for routine work
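
The matching rule in the last takeaway can be expressed as a small sketch. The thresholds, the category labels, and the way time sensitivity is handled are assumptions made for illustration; the summary does not specify how the labs' frameworks draw these lines.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    monthly_volume: int     # tasks per month
    value_per_task: float   # estimated business value per task
    time_sensitive: bool


def recommend(workload, volume_threshold=10_000, value_threshold=5.0):
    """Map a workload to a solution tier (hypothetical thresholds)."""
    high_volume = workload.monthly_volume >= volume_threshold
    high_value = workload.value_per_task >= value_threshold
    if high_volume and high_value:
        return "custom solution"
    # Assumption: valuable or urgent work still merits an agent tool.
    if high_value or workload.time_sensitive:
        return "off-the-shelf agent"
    return "simple automation"
```

For example, `recommend(Workload("invoice triage", 50_000, 8.0, False))` lands in the custom tier, while a low-volume, low-value chore falls through to simple automation.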